Here is a text I’ve written some time ago :

While browsing on gaming development sites, forums and chats I encountered a widespread statement : “premature optimization is a root of all evil” (or similar formulation).

Even though it may be true in some extreme cases, I think that overusage of this phrase started causing more troubles, than the optimization itself. It encourages beginners to skip planning and architecture.

It is important to think through every part of the code and see the big picture. Not to code the first possible solution of a problem. Otherwise you’ll be building on top of weak foundations and you’ll run into some serious problems later. And it is increasingly difficult to correct and refactor those foundations. Therefor it’s important to create solid and optimal solutions in the first place.

Let me tell you one example.

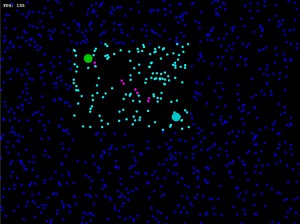

Imagine you are programming a space shooter game, with lot of ships, lasers and everything. You would like to incorporate a functionality, where a laser from one ship will collide with anything in it’s path. So what you need is an algorithm to detect collision between a line (laser beam) and a circle (representing bounding sphere of any object). You search on Google and find an algorithm. You may see the task as finished, but now is a good time to think about necessary optimizations.

Current PC is of course able to execute the algorithm in almost no time. So you may think no optimization is needed. I’d like to prove the contrary.

The first step is to understand how many times the algorithm may be called per second. Let’s see.

Common game engine may be updated 60 times per second. That means 60 steps are done each second. The whole world is updated and all calculations are done 60x per second. That gives us 1000/60 = 16 miliseconds for one update. So you need to get all calculations for one step into the 16ms timeframe.

Now let’s think of number of ships in the game. It may be safe to asume that it may be 30 ships on the map at one time. Each of them may carry 3 lasers. So in one step, you may need to check collision between a laser and ship for 90 lasers and 30 objects. That gives us 2700 algorithm calls per update. So it is very important to think of some reasonable maximum of objects in your game and determine the time required to do the calculations for all those objects. In our example I assumed dozens of ships with some lasers each. And it gives us roughly thousands of algorithm calls.

Now how fast do you need those thousand calls to finish ? We have 16ms for each update. But that is the MAXIMUM time and it is for ALL calculations within this update. Not only for some line/circle intersection checks. So we may say that you need the several thousands calls to finish within 1 ms. But that’s in worst case scenario. Ideally you are aiming for 100 microseconds or less.

To summarize – you need the algorithm to be fast enough to finish thousands of iterations within 100 micro seconds. That means tens of millions calls per one second. And now you probably start to understand why optimization is important. The basic principles of game engine are cutting your available time to a fraction of second and just a small number (30) of objects is giving you thousands of necessary calls.

Try iterate through your algorithm 10 000 times. And if it takes more time than 100microseconds, you should probably think about some optimization. Another thing to consider is the speed of target platform. Players PCs may vary in power in one order of magnitude. Some other platforms (tablets, cellphones) even more.

What will happen if you believe that premature optimization is useless ? You will end up with a game where suddenly some situations are very slow. You’ll try to find out why. And after some difficult profilling you’ll see, that several key components of your engine need 1-5ms to finish. Putting it all together it grows beyond your 16ms timeframe. And you will have to get back to those algorithms. Some of the bottlenecks you’ll never find, because separatelly they are not suspicious and very greedy, but together they all add up.

Some of them you’ll be able to optimize, but it will be more difficult, because it’s been some time and you don’t have it fresh in mind. Also there is a lot of stuff build on top of that, which means more refactoring.

Some of them however, you’ll find impossible to optimize well enough. There simply may not exist an effective algorithm to do that fast enough for given number of objects. And you’ll realize you built a lot of stuff on top of something, that will never work. And instead of knowing at the beginning, you only find that out now. It’s like building a bridge, when after you’ve built half of it, you realize the material is not strong enough to support a bridge that long.

Great, I simply love this game, its a shame that FinalWar2 does not work on new computers, If you need some game testing, I will be glad to help out.

It is problematic. However there are some tricks how to get it running. No guarantees, but : 1) try to set resolution of your display to 1024*768 (native game resolution) 2) try to switch off all other applications (browsers, IMs, winamp…) 3) try windows compatibility modes.